The initial novelty of generative video has begun to fade, replaced by the more demanding requirements of professional production. For content teams and individual creators, the “slot machine” approach of text-to-video—where a prompt is entered and a random, often unusable, result is returned—is no longer sufficient. The industry is pivoting toward more deliberate, operator-led workflows. At the heart of this shift is the transition from static source images to controlled motion, a process that prioritizes composition and intent over algorithmic luck.

Using a static image as the foundation for video production provides a “ground truth” that text prompts lack. It allows the creator to lock in character design, lighting, and environmental layout before a single frame of motion is rendered. However, translating that static frame into a reliable video output requires an understanding of how motion engines interpret pixels and where the technical friction points lie.

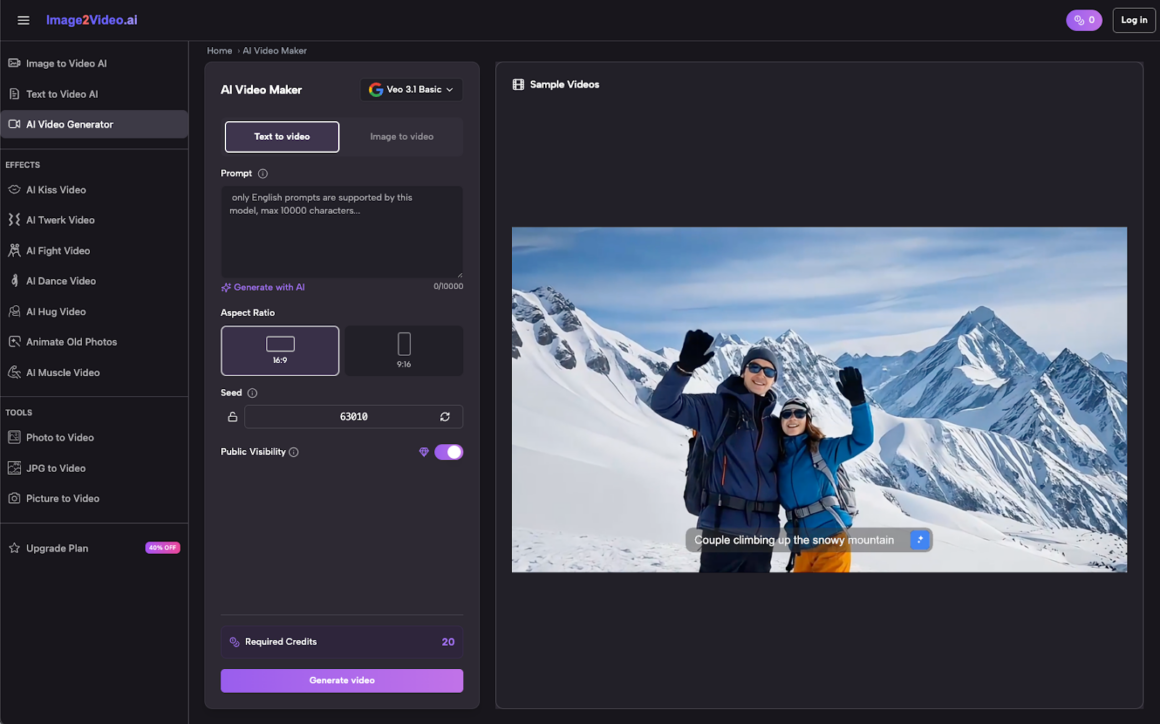

The Strategic Shift to Image-to-Video

Text-to-video models often struggle with “spatial coherence.” If you ask a model to generate a video of a specific person in a specific kitchen, the model has to simultaneously invent the person, the kitchen, and the movement. This frequently leads to subjects that morph mid-scene or environments that warp inconsistently.

By starting with a high-quality source image, the creator offloads the “invention” task to a static generator or a curated photograph. This narrows the AI’s responsibility to a single dimension: temporal consistency. When utilizing Image to Video AI, the workflow changes from creative discovery to technical execution. The goal is no longer to see what the AI can make, but to dictate how the existing image should behave over time.

This approach is particularly effective in marketing and social media, where brand consistency is non-negotiable. A product photo must remain recognizable; it cannot sprout extra buttons or change its label halfway through a five-second clip. Image to Video workflows provide the necessary guardrails for these professional standards.

Anatomical Integrity and Motion Vectors

One of the primary challenges in the Photo to Video transition is maintaining the structural integrity of the subject. AI models operate by predicting the next most likely arrangement of pixels. Without a clear understanding of physics or skeletal structure, the model may interpret a walking motion as a series of pixel-smears rather than the movement of limbs.

To mitigate this, operators must consider the “motion potential” of their source image. An image with a clear silhouette and distinct separation between the foreground and background is significantly easier for the AI to animate. When the subject is cluttered or the lighting is flat, the motion engine may struggle to identify which pixels belong to the character and which belong to the scenery, leading to the dreaded “melting” effect where the subject appears to fuse with their surroundings.

It is worth noting a significant limitation here: current models still struggle with high-velocity or complex movements. A simple head turn or a gentle breeze through hair is usually handled with high fidelity. However, complex actions like tying a shoelace or performing a backflip often result in anatomical “hallucinations” where limbs appear and disappear. Expectation management is key; the technology is currently optimized for atmospheric and cinemagraph-style motion rather than complex choreography.

The Mechanics of Photo to Video AI

Modern systems like Photo to Video AI utilize latent diffusion models that have been fine-tuned on video datasets. These models look at the source image and identify “motion latent” areas. When an operator provides a prompt alongside the image, they are essentially providing a vector for that motion.

For example, if the source image is a mountain landscape and the prompt is “slow pan right with clouds moving,” the AI doesn’t just “move” the image. It generates new pixels that “should” exist based on the perspective shift while maintaining the textures of the original mountain. This is where the choice of tool becomes critical. A robust Image to Video engine will respect the “seed” of the original image, ensuring that the last frame of the video looks like a logical progression of the first.

Practical workflows often involve:

- Image Preparation: Upscaling the source image to the desired output resolution to prevent the AI from “guessing” fine details.

- Depth Mapping: Some advanced workflows involve creating a depth map to tell the AI which objects are close and which are far, though many integrated tools now handle this internally.

- Prompt Weighting: Balancing the text prompt so it describes the motion (e.g., “rippling water,” “cinematic zoom”) rather than redescribing the *subject*.

Controlling the Camera vs. Controlling the Subject

In a traditional film set, there is a distinction between the movement of the camera and the movement of the actors. In the world of Photo to Video, the AI often conflates the two unless explicitly guided.

Camera Motion includes pans, tilts, zooms, and dollies. This is generally the most stable type of motion because it involves a uniform shift of pixels across the frame. Most Image to Video AI tools excel at this, creating a sense of three-dimensionality by slightly shifting the perspective of objects based on their perceived depth.

Subject Motion is far more volatile. This involves the internal movement of elements within the frame—a person waving, a cat jumping, or a car driving. The difficulty here lies in “occlusion.” When a subject moves, they reveal parts of the background that were previously hidden. The AI must “inpaint” these new areas on the fly. If the background is complex, the inpainting might be inconsistent, leading to flickering or “ghosting” artifacts.

Operators should be aware that results are currently inconsistent when trying to combine aggressive camera movement with aggressive subject movement. Often, the most professional-looking results come from choosing one or the other: a moving camera on a relatively still subject, or a static camera on a moving subject.

Managing Technical Uncertainty in Production

Even with the best tools, there is an inherent level of uncertainty in generative video. A prompt that works perfectly for one image may fail on another due to subtle differences in lighting or texture. This is a significant hurdle for content teams used to the predictable timelines of traditional editing.

One must account for a “success rate.” In a professional environment, an operator might need to generate five to ten variations of a single motion sequence to get one that is “broadcast ready.” This isn’t necessarily a failure of the tool, but a reflection of the probabilistic nature of the technology. We are currently in a stage where the AI is a highly talented, yet occasionally erratic, digital puppeteer.

Another limitation involves temporal duration. Most high-fidelity Image to Video outputs are limited to short bursts of 3 to 10 seconds. Attempting to generate longer sequences often leads to “drift,” where the subject’s features slowly change over time. For longer content, the current best practice is to generate multiple short “shots” from different source images and stitch them together in a traditional video editor.

Practical Use Cases: From Social Media to Heritage

Despite the limitations, the practical applications for these workflows are expanding rapidly.

Marketing and E-commerce: Static product shots can be turned into dynamic ads. A watch can have light glinting off its surface; a dress can have a gentle sway. This adds a level of premium feel to a storefront without the cost of a full video production.

Social Media Content: Content creators can use AI to “breathe life” into their digital art or photography. This is particularly useful for platforms that prioritize video content in their algorithms. A single high-quality image can be repurposed into multiple video loops, extending the life of the original asset.

Restoration and Heritage: One of the most emotionally resonant uses of this technology is the animation of old family photos. Taking a static, black-and-white portrait from a century ago and adding a subtle blink or a smile creates a powerful connection to the past. This requires a restrained touch; over-animating heritage photos often leads to an “uncanny valley” effect that feels unnatural or disrespectful to the original subject.

The Path Forward: Toward Physics-Aware Models

The next frontier for Image to Video is the integration of physics-aware engines. Current models are essentially “guessing” what motion looks like based on visual patterns. The next generation of tools will likely incorporate a more fundamental understanding of how objects move in 3D space, how gravity affects fabric, and how light reflects off moving surfaces.

Until then, the burden of control remains with the human operator. By treating the AI as a sophisticated rendering engine rather than a “magic box,” creators can achieve reliable, high-quality outputs that bridge the gap between a single moment and a moving story. The transition from Photo to Video is no longer just about the technology—it is about the engineering of intent. Through careful selection of source images, deliberate motion prompting, and an awareness of current technical boundaries, the potential for creative expression is virtually limitless.