For creative operations leads, the novelty of generative AI wore off months ago. The current challenge isn’t whether a model can generate a high-fidelity person, but whether it can generate the same person across a storyboard, a social ad campaign, and a promotional video. In a professional production pipeline, “close enough” is usually a failure. When a character’s jawline shifts or their outfit changes color between frames, the immersion breaks, and the asset becomes unusable for brand-driven storytelling.

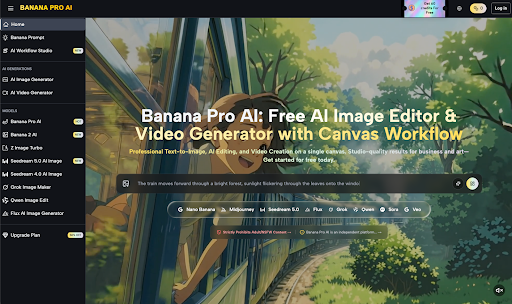

Maintaining subject identity requires moving away from the “slot machine” approach of basic prompting toward a structured workflow. This is where the ecosystem surrounding Banana Pro and its associated models, such as Nano Banana Pro, begins to offer a more disciplined framework for asset creation. By leveraging specific canvas tools and image-to-image logic, teams are finding ways to anchor visual identity even as environments change.

The Drift Problem in Generative Media

Most diffusion models are inherently stochastic. You provide a prompt, and the model navigates a latent space to find a result that fits the description. The problem arises when you need to change the setting or the action while keeping the subject fixed. Standard text-to-image prompts often prioritize the environment or the lighting over the specific facial geometry of a character.

In practice, even highly descriptive prompts like “man with a square jaw and a blue linen shirt” will yield ten different men across ten different generations. For an agency building a repeatable asset pipeline, this lack of control is a bottleneck. To solve this, technical operators have moved toward reference-based workflows. This is where Banana AI provides a functional middle ground, allowing users to feed a primary reference into the engine to serve as a visual anchor.

Anchoring Identity with Nano Banana Pro

The Nano Banana Pro model is frequently cited in creator workflows because of how it handles weights between the prompt and the reference image. When working in an AI Image Editor environment, the goal is to lock the subject’s “seed” characteristics—their facial proportions, hair texture, and distinctive features—while allowing the rest of the scene to remain fluid.

However, every lead must acknowledge one limitation: no current model offers perfect consistency without post-production. Even with Nano Banana Pro, you may see minor shifts in skin tone or eye color depending on the lighting of the new environment. Operators should expect to perform minor “face swaps” or color corrections to finalize a series of assets. The tool gets you roughly 90% of the way there, a large leap from the roughly 20% consistency seen in general-purpose models, but the final 10% still requires human oversight.

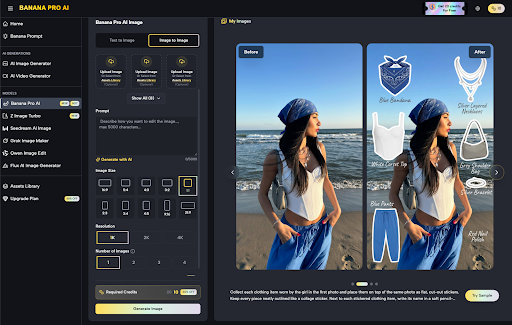

The Canvas Workflow as a Control Center

One of the more practical developments in this space is the shift from a linear prompt box to a canvas-based studio. When you work on a canvas, you aren’t just generating images in a vacuum; you are managing spatial relationships.

For character consistency, this means you can generate a character, isolate them, and then build the background around them using outpainting or specific image-to-image layers. This “inside-out” approach is significantly more reliable than trying to describe a character and a complex background in a single prompt. By using Nano Banana as the foundational engine within a canvas, teams can maintain the subject’s scale and positioning across a series of frames.

Moving from Static to Motion

The difficulty of maintaining identity doubles when you move from static images to video. In a video generation context, temporal consistency—ensuring the character doesn’t morph into someone else as they move—is the holy grail. Tools like Seedance 2.0, accessible through the Banana Pro interface, attempt to solve this by using the initial frame as a rigid guide.

The workflow typically involves:

- Generating the “Hero” image using a high-fidelity model like Seedream 5.0.

- Refining the character features in the editor to ensure they meet brand standards.

- Passing that specific image into the video generator as a structural reference.

An expectation reset is in order here: long-form video consistency is still a work in progress across the industry. While you can maintain a character for a 3-to-5-second clip, a 30-second continuous shot in which the character remains identical is currently prone to “hallucinations.” Most successful teams build their narratives from short, high-consistency cuts rather than attempting long, unedited takes.
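Planning a sequence as short cuts can be done before any generation happens. The sketch below is illustrative only: `plan_cuts` and its 5-second ceiling are assumptions drawn from the 3-to-5-second range mentioned above, and nothing here calls Banana Pro or Seedance 2.0.

```python
def plan_cuts(total_seconds: float, max_clip_seconds: float = 5.0) -> list[tuple[float, float]]:
    """Split a shot into consecutive (start, end) clips no longer than max_clip_seconds.

    Hypothetical helper: the 5-second default is an assumption, not a tool limit.
    """
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    cuts = []
    start = 0.0
    while start < total_seconds:
        # The final clip is shortened so the plan never overruns the shot.
        end = min(start + max_clip_seconds, total_seconds)
        cuts.append((start, end))
        start = end
    return cuts


if __name__ == "__main__":
    for start, end in plan_cuts(30):
        print(f"clip {start:.0f}s to {end:.0f}s, anchored to the same hero image")
```

A 30-second narrative becomes six 5-second clips, each of which can reuse the same hero image as its structural reference.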

Structural Integrity and Subject Persistence

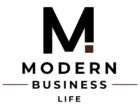

Beyond just the face, character consistency involves “subject persistence”—the way clothing, accessories, and even lighting interact with the person. If a character is wearing a specific patterned jacket, that pattern needs to exist in every shot.

Using the image-to-image capabilities of Banana AI, creators can “transfer” the style and costume of a character onto new poses. This is often more effective than re-typing the description of the clothes. Given a clear visual of the jacket, the model captures the texture and color palette better than a text description could convey. This tactical use of reference images reduces the cognitive load on the prompt engineer and increases the reliability of the output.

The Role of Specialized Models: Z Image Turbo and Seedream 5.0

Different engines within the ecosystem serve different roles in the consistency pipeline.

- Z Image Turbo: Often used for rapid prototyping of scenes. If you need to quickly see how a character might look in fifty different locations, the speed of this model allows for high-volume testing before committing to a final render.

- Seedream 5.0: This is generally where the “heavy lifting” of character detail happens. It offers a higher density of pixels and a more nuanced understanding of anatomy, which is crucial for the primary hero assets that will serve as references for the rest of the project.

By mixing these models, a creative lead can manage resources effectively—using the faster models for background concepts and the high-fidelity models for character-critical frames.
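That routing rule is simple enough to write down explicitly. The following is a minimal sketch, assuming a hypothetical `Frame` record and `pick_model` helper; the model names come from the text above, but the code only returns a label and does not call any real API.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    """Hypothetical record describing one planned shot."""
    name: str
    character_critical: bool


def pick_model(frame: Frame) -> str:
    """Route character-critical frames to the high-fidelity model, the rest to the fast one."""
    return "Seedream 5.0" if frame.character_critical else "Z Image Turbo"


if __name__ == "__main__":
    shots = [
        Frame("hero close-up", character_critical=True),
        Frame("street background concept", character_critical=False),
    ]
    for shot in shots:
        print(f"{shot.name}: {pick_model(shot)}")
```

Tagging each frame as character-critical or not at the planning stage makes the resource decision visible before any credits are spent.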

Managing the Pipeline: A Practical Framework

For a team lead looking to implement these tools, the workflow should look something like this:

Phase 1: Character Design

Create a “Character Sheet” using a single model, ideally Nano Banana Pro, showing the character in front, side, and three-quarter views. This sheet becomes the “source of truth” for all subsequent generations.

Phase 2: Environment Mapping

Generate the environments separately. This ensures the lighting and architectural style remain consistent without the character’s presence “polluting” the environmental prompt.

Phase 3: Integration

Use the canvas workflow to place the character from the sheet into the environment. This is where the AI Image Editor is used to mask, blend, and adjust shadows to make the character look native to the scene.

Phase 4: Audit and Refinement

Review the assets as a sequence. If a character’s hair looks too dark in a sunset scene compared to a midday scene, use the localized editing tools to pull the colors back into alignment. Consistency is an iterative process, not a “one-click” result.
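Part of the Phase 4 audit can be made mechanical. As a rough sketch, assuming you have already sampled a handful of RGB pixels from the same region (for example, the hair) in each frame, the hypothetical `flag_drift` helper below compares each frame's average color to the character sheet and lists the frames that stray too far. The threshold of 30 is an arbitrary illustration, not a recommended value.

```python
from math import dist


def mean_color(pixels):
    """Average a list of (R, G, B) tuples into one (R, G, B) tuple."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))


def flag_drift(reference_pixels, frames, threshold=30.0):
    """Return names of frames whose mean color is farther than threshold from the reference.

    Distance is plain Euclidean distance in RGB space, which is a crude
    stand-in for perceptual difference.
    """
    ref = mean_color(reference_pixels)
    return [
        name
        for name, pixels in frames.items()
        if dist(ref, mean_color(pixels)) > threshold
    ]


if __name__ == "__main__":
    sheet_hair = [(100, 80, 60)] * 4
    frames = {
        "midday": [(102, 82, 61)] * 4,
        "sunset": [(60, 40, 30)] * 4,
    }
    print(flag_drift(sheet_hair, frames))
```

A check like this only narrows down which frames need attention; the actual correction still happens in the localized editing tools.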

Technical Limitations and Reality Checks

We must be realistic about the current state of AI-driven consistency. There is a “memory limit” to how much a model can carry over from a reference. If the pose is too extreme—for example, moving from a static portrait to an action shot of someone sprinting—the model may prioritize the physics of the movement over the precision of the facial features.

Furthermore, hardware and credit costs are a factor. High-consistency workflows, especially those involving multiple passes through an image-to-image engine, are more resource-intensive than simple text-to-image generation. Teams must weigh the cost of these extra passes against the manual labor time of a traditional designer. In most commercial cases, the AI workflow is still significantly faster, but it is not “free” in terms of time or compute.
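The trade-off can be estimated with simple arithmetic. The sketch below is purely illustrative: the function names and every figure in the example (passes per asset, credit price, hourly rate, review time) are made-up placeholders to be replaced with your own numbers, not published pricing.

```python
def ai_cost(assets, passes_per_asset, credits_per_pass, cost_per_credit,
            review_minutes_per_asset, hourly_rate):
    """Estimate AI workflow cost: compute credits plus human review time."""
    compute = assets * passes_per_asset * credits_per_pass * cost_per_credit
    review = assets * (review_minutes_per_asset / 60) * hourly_rate
    return compute + review


def manual_cost(assets, hours_per_asset, hourly_rate):
    """Estimate the cost of producing the same assets by hand."""
    return assets * hours_per_asset * hourly_rate


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    ai = ai_cost(assets=20, passes_per_asset=4, credits_per_pass=10,
                 cost_per_credit=0.05, review_minutes_per_asset=15, hourly_rate=60)
    manual = manual_cost(assets=20, hours_per_asset=3, hourly_rate=60)
    print(f"AI workflow: {ai:.2f}, manual: {manual:.2f}")
```

Including review time in the AI estimate keeps the comparison honest, since the extra passes and the final human corrections are both part of the real cost.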

The Future of Subject-Locked Content

As we look at the trajectory of tools like Banana Pro, the focus is clearly shifting toward granular control. The introduction of specific models like Nano Banana Pro suggests a future where we move away from global prompts and toward “weighted subjects.” Imagine a world where you can assign a 1.0 weight to a character’s face and a 0.5 weight to their clothing, allowing the environment to influence the outfit’s lighting while the face remains untouchable.

Until then, the most successful creative operations leads are those who treat AI as a sophisticated multi-tool rather than a magic wand. By combining the strengths of different models, using canvas-based positioning, and maintaining a healthy dose of skepticism regarding “zero-shot” consistency, teams can finally build repeatable, high-quality asset pipelines that meet professional standards.

The transition from “AI as a toy” to “AI as a production tool” is defined by this move toward consistency. Whether you are using Nano Banana for a single character or orchestrating an entire scene with Seedance 2.0, the goal remains the same: visual truth that holds up across every frame of the story. Consistent identity is the difference between a collection of cool images and a coherent brand narrative.