An AI music generator often becomes relevant at the moment when a musical idea is clear in feeling but still missing in form. Many people know the mood they want, the scene they are scoring, or the emotional direction a lyric should carry, yet they cannot translate that instinct into melody, structure, instrumentation, and vocal delivery on demand. That gap is where tools like ToMusic start to matter. They do not remove taste from the process. They make taste usable earlier, before a rough idea is lost to delay, friction, or technical limitations.

What makes this shift interesting is not simply that a machine can generate music from words. It is that the interface is built around how many people already think. Instead of beginning with a DAW timeline, MIDI programming, or plugin chains, the process begins with description. In my observation, that changes the first creative decision from “how do I build this?” to “what should this feel like?” For many creators, that is a more natural place to start.

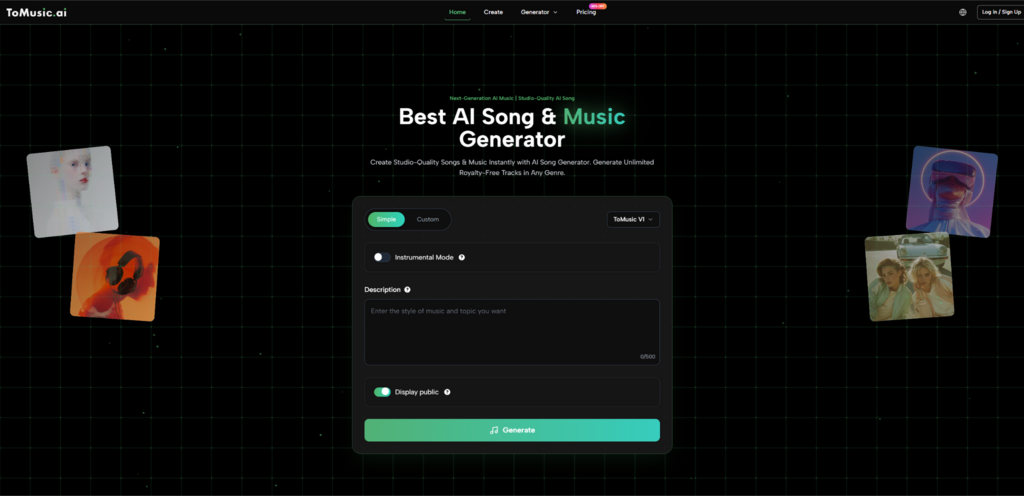

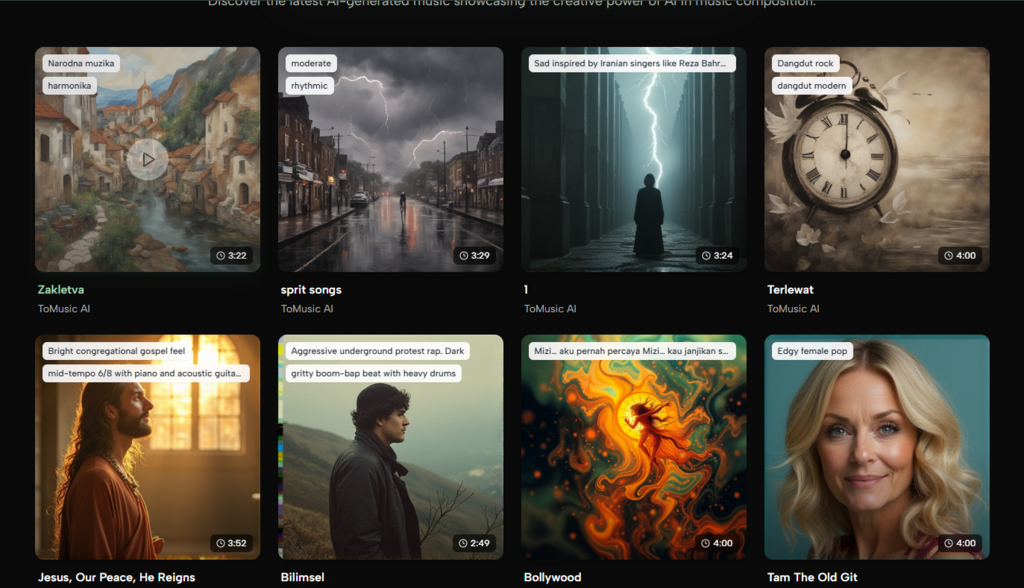

ToMusic is especially useful to examine because its public workflow is relatively easy to understand. The platform is centered on turning text prompts or lyrics into music, while also letting users shape style, mood, tempo, instrumentation, and voice-related preferences. It also separates model tiers, stores outputs in a personal library, and includes download and stem-related options. Those details matter because they show that the platform is not only chasing novelty. It is trying to build a repeatable music-making workflow around language.

Why Description Became A Practical Music Interface

Before tools like this, many people approached music creation through translation layers. A brand manager had to explain a concept to a producer. A filmmaker had to sort through stock tracks until one felt close enough. A songwriter with lyrics had to imagine the arrangement but still needed help turning words into a singable recording. In all of these cases, the problem was not a lack of intention. The problem was the distance between intention and execution.

ToMusic reduces part of that distance by accepting descriptive language as input. If a user wants something cinematic, restrained, warm, and slightly nostalgic, that description can function as a starting command rather than a vague note in a separate brief. This matters because creative work often fails in the handoff between what someone means and what the production process can capture.

How ToMusic Interprets Creative Intent Through Words

The platform's public description suggests that the system reads more than literal subject matter. It appears designed to map words into musical attributes such as genre, mood, tempo, instrumentation, and vocal direction. That means a useful prompt is not only a sentence. It is a compact creative brief.

A prompt can imply pacing. It can imply density. It can imply whether the result should feel intimate or expansive. The user is therefore not writing poetry for the machine. The user is specifying interpretable musical constraints in ordinary language.
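
To make the idea of a prompt as a compact creative brief concrete, here is a minimal Python sketch. It assumes nothing about how ToMusic parses input. The `CreativeBrief` class and its field names are hypothetical. They loosely mirror the attributes the platform is described as handling (style, mood, tempo, instrumentation, vocal direction) and add a `purpose` field for the job the music has to do. The sketch simply assembles those constraints into one plain-language prompt.

```python
from dataclasses import dataclass, field


@dataclass
class CreativeBrief:
    """Hypothetical container for the musical constraints a prompt implies.

    Field names are illustrative only; they are not ToMusic's parameters.
    """
    purpose: str                                   # what the music is for
    mood: list[str] = field(default_factory=list)  # emotional direction
    style: str = ""                                # genre or overall style
    tempo: str = ""                                # pacing in plain language
    instruments: list[str] = field(default_factory=list)
    vocals: str = ""                               # vocal direction, or "instrumental"

    def to_prompt(self) -> str:
        """Render the brief as one ordinary-language prompt, skipping empty fields."""
        parts = [f"Music for {self.purpose}."]
        if self.mood:
            parts.append(f"Mood: {', '.join(self.mood)}.")
        if self.style:
            parts.append(f"Style: {self.style}.")
        if self.tempo:
            parts.append(f"Tempo: {self.tempo}.")
        if self.instruments:
            parts.append(f"Instrumentation: {', '.join(self.instruments)}.")
        if self.vocals:
            parts.append(f"Vocals: {self.vocals}.")
        return " ".join(parts)


# Example: the "cinematic, restrained, warm, slightly nostalgic" request as a brief.
brief = CreativeBrief(
    purpose="a 30-second product demo",
    mood=["warm", "restrained", "slightly nostalgic"],
    style="cinematic",
    tempo="slow build",
    instruments=["piano", "soft strings"],
    vocals="instrumental",
)
print(brief.to_prompt())
```

Writing the brief as fields first makes it easy to notice which constraint is missing, for example no stated purpose, before the words ever reach the generator.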

Why Mood Terms Often Carry Hidden Arrangement Signals

One reason text-driven music tools feel intuitive is that mood words are rarely only emotional. In practice, words such as hopeful, tense, dreamy, or triumphant usually imply arrangement choices. They hint at tempo range, harmonic tension, melodic contour, reverb depth, vocal delivery, and instrument weight.

That is why this kind of interface feels more practical than it first appears. The user may think they are only describing a vibe, but they are often providing enough information to guide several production decisions at once.
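
One way to see how much a single mood word carries is to expand it into explicit hints before writing the prompt. The sketch below is an illustration built on assumptions. The `MOOD_HINTS` table is hypothetical and hand-written from common genre conventions. It covers only four of the attributes mentioned above (tempo, harmony, melody, reverb) and says nothing about how ToMusic itself interprets these words.

```python
# Hypothetical, hand-written associations for illustration only.
# They are rough conventions, not ToMusic's internal mapping.
MOOD_HINTS: dict[str, dict[str, str]] = {
    "hopeful": {"tempo": "moderate", "harmony": "mostly major, low tension",
                "melody": "rising phrases", "reverb": "medium"},
    "tense": {"tempo": "moderate to fast", "harmony": "unresolved, dissonant",
              "melody": "short repeated motifs", "reverb": "dry"},
    "dreamy": {"tempo": "slow", "harmony": "extended chords, soft resolution",
               "melody": "floating, legato", "reverb": "deep"},
    "triumphant": {"tempo": "moderate to fast", "harmony": "bright major",
                   "melody": "wide leaps, strong cadences", "reverb": "large hall"},
}


def expand_moods(moods: list[str]) -> dict[str, list[str]]:
    """Collect the arrangement hints implied by each known mood word.

    Unknown words are ignored. Hints from several moods are grouped per
    attribute, so conflicting signals (e.g. "slow" vs "moderate") stay visible.
    """
    expanded: dict[str, list[str]] = {}
    for mood in moods:
        for attribute, hint in MOOD_HINTS.get(mood.lower(), {}).items():
            expanded.setdefault(attribute, []).append(hint)
    return expanded


hints = expand_moods(["Dreamy", "hopeful"])
print(hints["tempo"])  # two moods pulling pacing in slightly different directions
```

The point is not the specific associations, which are debatable. It is that two "vibe" words already imply several production decisions, and sometimes those decisions disagree.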

Why This Approach Helps Non-Technical Creators

A large part of the platform’s appeal is that it lowers the entry barrier without making the process feel toy-like. Someone who has never built a session in music software can still express a useful request. At the same time, the output is not detached from craft. The user still needs judgment. They still need to decide whether the generated song actually fits the intended purpose.

That balance matters. Good creative tools do not merely make production easier. They make evaluation more central. When generation becomes fast, taste becomes the bottleneck. That is often a healthy shift.

How ToMusic Structures Generation Into A Usable Flow

What I find more interesting than the headline claim is the shape of the workflow behind it. The platform does not present generation as a single mysterious button. It frames the process through controllable inputs, model choices, and post-generation management.

Step One: Build A Prompt Or Lyric Brief

The first step is to enter either a text prompt or lyrics. This is the platform’s real foundation. A user can describe mood, style, instrumentation, and other qualities directly, or can bring in complete lyrics and let the system generate a song around them.

This step works best when the user knows the function of the music. A soundtrack cue for a product demo, a reflective vocal piece for a personal project, and a catchy short-form social song may all use similar words but demand different pacing and energy. The better the brief, the more useful the first result tends to be.

Step Two: Refine Musical Direction With Controls

The second step is refinement. ToMusic publicly emphasizes control over styles, tempo, mood, and voice characteristics. In practical terms, this means the platform is not asking users to rely on luck alone. It encourages them to specify what kind of music they want instead of accepting a generic output.

This is important because music generation can otherwise become a slot machine. A platform becomes more credible when it lets users guide the result in musically meaningful ways.

Why Controlled Inputs Matter More Than One-Click Magic

One-click generation sounds appealing in a demo, but real work usually needs more than spectacle. A creator may need a stronger chorus feel, a softer vocal tone, or an arrangement that does not overpower spoken dialogue. Those requirements are hard to meet consistently without some control layer.

From that perspective, ToMusic’s design makes sense. It appears to treat music generation as an editable request, not as a random surprise generator.

Step Three: Choose The Model That Fits The Job

The platform also distinguishes among multiple models, from V1 through V4. Public descriptions suggest that newer or higher-tier models are positioned around better vocals, richer harmonic behavior, deeper arrangement, or longer composition support.

That model separation matters because not every task has the same target. In my view, users benefit when a platform admits that one mode does not fit every need. A rough early sketch, a lyric-centered demo, and a more polished vocal song may deserve different generation strategies.

Step Four: Review, Download, And Reuse The Result

Once a song is generated, it moves into the Music Library. This is one of the more practical parts of the system. The library stores tracks with metadata such as titles, tags, descriptions, lyrics, and generation parameters. Users can revisit earlier work, compare prompt variations, and download results in available formats.

That archive layer makes the platform feel closer to a production environment than a novelty app. A serious creator does not only need outputs. They need memory.

What Makes The Music Library More Important Than It Looks

A lot of AI products focus so heavily on generation that they neglect what happens afterward. ToMusic’s library matters because music creation is iterative. A person often does not remember the exact wording that produced the best result. Metadata preservation solves part of that problem.

Why Stored Parameters Improve Future Results

When each track keeps prompt, lyrics, tags, descriptions, and settings, the user can learn from earlier successes. This creates a feedback loop. Good outputs become references. Weak outputs become lessons. Over time, the creator is not merely generating more songs. They are building a better sense of how language maps to useful musical outcomes.
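
The feedback loop described here can be modeled with very little code. This is a minimal sketch that assumes a hypothetical local export of library entries. The `TrackRecord` fields loosely mirror the metadata the library is described as keeping (title, prompt, tags, model, settings). The `rating` field is an assumed addition for the user's own judgment, not a documented ToMusic feature. The `best_prompts` helper, also hypothetical, finds past tracks for a tag and surfaces the prompts behind the best-rated ones.

```python
from dataclasses import dataclass, field


@dataclass
class TrackRecord:
    """Hypothetical local copy of one library entry; not ToMusic's schema."""
    title: str
    prompt: str
    tags: set[str]
    model: str                                     # e.g. one of the V1-V4 tiers
    settings: dict[str, str] = field(default_factory=dict)
    rating: int = 0                                # user's own 0-5 judgment (assumed)


def best_prompts(library: list[TrackRecord], tag: str, min_rating: int = 4) -> list[str]:
    """Return prompts of tracks with the given tag and rating, best first."""
    matches = [t for t in library if tag in t.tags and t.rating >= min_rating]
    matches.sort(key=lambda t: t.rating, reverse=True)
    return [t.prompt for t in matches]


library = [
    TrackRecord("Demo A", "warm cinematic piano, slow build", {"product-demo"}, "V3", rating=5),
    TrackRecord("Demo B", "upbeat electronic, busy drums", {"product-demo"}, "V2", rating=2),
    TrackRecord("Demo C", "restrained strings, slightly nostalgic", {"product-demo"}, "V4", rating=4),
]
print(best_prompts(library, "product-demo"))
```

Good outputs become reusable wording, and the low-rated prompt ("busy drums") stays in the record as a lesson rather than disappearing.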

That is a subtle but important difference. The platform can gradually function as both generator and archive of creative experiments.

Why Cross-Device Access Supports Real Workflows

The cloud-based library also reflects an understanding of how modern creators work. Ideas begin on phones, are reviewed on laptops, and are sometimes downloaded later for editing or client review. Persistent storage across devices makes that process less fragile.

This convenience is easy to underestimate, but it changes how casually people can capture musical ideas. An idea no longer needs to arrive during a formal studio session to become actionable.

How The Product Compares Across Practical Needs

| Need | How ToMusic Approaches It | Why It Matters |
| --- | --- | --- |
| Starting from an idea | Uses text prompts or lyrics | Lowers the barrier to first draft creation |
| Shaping musical direction | Lets users define style, mood, tempo, and voice traits | Makes results feel less random |
| Matching task complexity | Offers multiple models with different strengths | Helps align generation to goal |
| Managing outputs | Saves tracks with full metadata in a library | Improves iteration and reuse |
| Exporting results | Supports downloadable output and stem-related tools | Makes generated music easier to adapt |

Where This Kind Of Tool Is Most Useful

The most obvious audience is people who have ideas but not full production skills. That includes solo creators, marketers, video editors, indie game makers, and lyric writers who need a faster route to sound.

For Songwriters Working From Words First

Some people write lyrics before they hear the arrangement. For them, Lyrics to Music AI is not only a novelty phrase. It points to a real change in sequence. The lyric no longer has to wait for a traditional session before it can become audible. A writer can test whether the words feel intimate, dramatic, sparse, or overproduced in different musical settings much earlier.

That does not mean the first output is automatically final. But it does mean the writer can hear structural possibilities sooner. In many creative processes, that is a major advantage.

For Content Teams Needing Fast Musical Direction

Another strong use case is content production. Short videos, explainers, product pages, and social media campaigns often need music that fits a very specific tone without consuming weeks of custom production time. A text-based generation workflow is well suited to these situations because the brief is already verbal. The same marketing language used to define campaign tone can guide the music request.

For Experimental Creators Testing Multiple Angles

There is also value in variation. If a creator wants to test one concept as electronic, acoustic, cinematic, or vocal-forward, a prompt-driven system can make those branches easier to explore. In my observation, this encourages broader ideation because the cost of trying another direction feels lower.

Where The Limits Still Matter

A credible reading of the platform has to include constraints. Music generation quality still depends heavily on prompt clarity. If the request is vague, the result may be serviceable but not distinctive. If the prompt is overloaded with competing instructions, the output may feel confused.

Why Human Judgment Remains The Deciding Layer

Even when a generated song sounds polished, the human still has to decide whether it actually fits the purpose. The platform can generate options, but it cannot fully define the emotional standard for a brand, a film scene, or a personal artistic statement. That decision remains human.

Why Iteration Is Part Of The Real Workflow

The public product structure also suggests that iteration is normal. Multiple models, stored parameters, and library review all imply that users may need more than one attempt. That is not a flaw unique to this product. It is part of how language-driven generation works. The goal is not always immediate perfection. Often the goal is faster convergence.

Why This Matters Beyond One Tool

The broader significance of ToMusic is not only that it generates songs. It is that it treats language as a serious interface for music production. That is a larger shift in how creative software can be organized.

For years, the dominant assumption was that creation had to begin inside specialized technical environments. Now, at least for some tasks, creation can begin in ordinary descriptive language and move toward refinement afterward. That changes who can participate, how quickly ideas can be tested, and what counts as a valid first draft.

ToMusic is interesting because its workflow makes that transition visible. You begin with words. You refine with musical controls. You choose a model based on the job. You keep the result in a persistent library. In that sense, the platform is not only generating tracks. It is proposing a different order of operations for music-making, one in which description comes first and technical polish follows after.